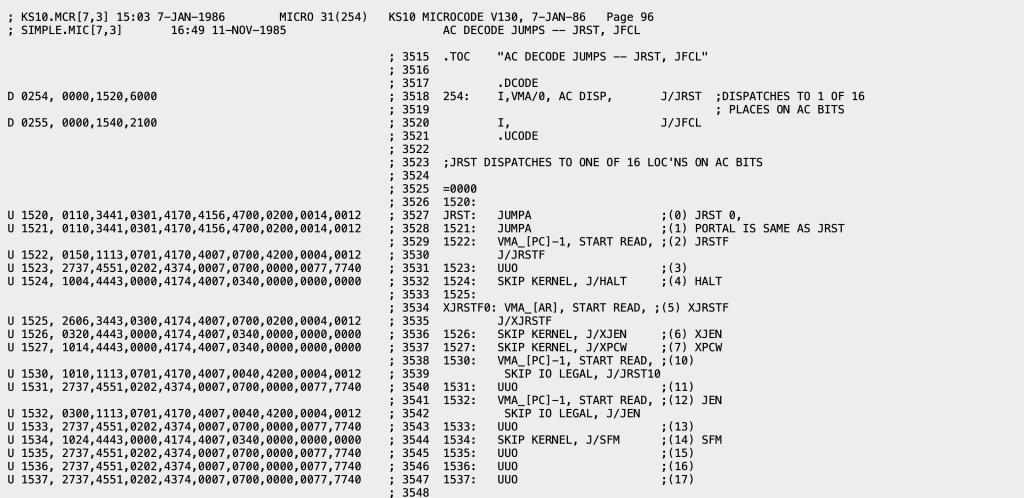

I’ve been thinking about this post for a long time, but using it to kick off 2026 just seems super appropriate. I have an unusually high percentage of readers who do know how to program a computer, but most of you don’t. You’ve never written a device driver. Fewer still in machine language. Speaking of machine language, you never wrote something (outside of perhaps a school project) in assembly language. You don’t know how to write code that lives in kernel space, and how to manage the transitions. And manually scheduling instructions for a RISC microprocessor? Nope, you haven’t done it. In reality, 98% of the developers of the world don’t even know what I’m talking about. Or how about this? How many developers even know what this is?

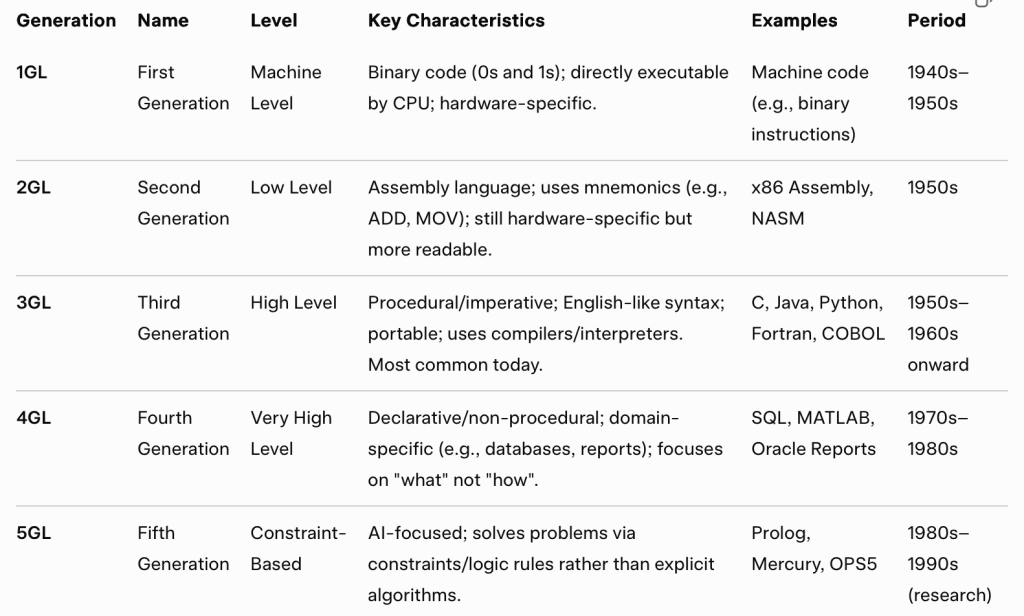

I’m not trying to pick on developers; I’m trying to make the point that from the earliest days of computing we’ve been adding abstractions to make it easier for developers to focus on solving a problem, not manipulating the computer directly. By the 1970s, programming was moving up the abstraction stack in a big way. Some of that is reflected in the progress we were making in programming languages.

It wasn’t just the languages changing, it was the run-time libraries. We came into the 1970s with the 3GL run-time libraries having very basic support for their programming languages. Simple math and string manipulation functions primarily. We ended the 1970s with the VAX (nee VMS) Common Run-Time Library offering a rich cross-language set of capabilities, including abstractions for accessing all kinds of services. It was basically the forerunner of what was done in .NET¹ in the 2000s.

It wasn’t just languages and run-times we saw changing the landscape. We saw tremendous growth in tooling. Integrated Development Environments (IDEs) like Visual Studio started partially generating applications for us, at least providing a template so we didn’t have to start every project with a blank sheet of paper. Rich sample applications appeared. Sites like Experts Exchange and StackOverflow appeared, giving developers access to more examples and code fragments, plus expert advice. CodePlex and then GitHub became repositories of all kinds of reusable code. Search engines, particularly Google, became the go-to tool for finding code or libraries you could reuse for any project.

In the 2010s no one was writing applications from scratch; they were using 3rd party libraries layered on other 3rd party libraries, and using source code picked up off of GitHub, to construct their applications. Application development was largely a wiring problem. Even traditionally lower level programming moved into this mode. You don’t write a compiler back-end, you use LLVM. And you use tools like ANTLR or Flex+Bison to create the compiler front-end.

The broader Information Technology space was also struggling in the 1970s with the “Application Backlog”. As computing grew, programmers just couldn’t keep up with application demand. The business people would ask for a new report and be told there was a one year wait before the programming staff could get around to it. Report Writers and query tools like RAMIS, FOCUS, and Datatrieve were created, both to increase programmer productivity and to give non-programmers a way to meet some of their own application development needs. These were the early 4th Generation Languages. They were followed by an ever increasing flow of Rapid Application Development tools. At DEC in the late 1980s we did TEAMDATA and RALLY².

4GLs and Rapid Application Development really took off in the PC world. Who can forget Crystal Reports? Microsoft Access became the bane of IT departments’ existence as users developed their own apps and, more to IT’s problem, deployed them across organizations! Spreadsheets, and particularly Microsoft Excel, ruled the application world. At one point at Microsoft we concluded there was more data stored in Excel spreadsheets than all the data in databases³. Then up until recently NoCode/LowCode, often based off the spreadsheet model, was all the rage.

And then there is “The Web”, and HTML, and JavaScript, and the myriad of tools that were created to make both client and backend website development easy. We even ended up with new categories of developers, Web Developers and Full Stack Developers, as a result. And we have a caste system, with Software Engineers generally deriding Web Developers⁴.

The funny thing is that despite this enormous effort to make development something anyone could do, throughout this century we’ve seen an explosion of growth in Software Engineers. It takes a lot of people, with a lot of expertise, to build things for hyperscale. The amount of distributed systems expertise at places like AWS is just staggering. But what those people do is make it so 98% of developers out there can build distributed systems without understanding distributed systems.

Which gets us to the main point of this long blog entry. The main thing that those of us working in the software industry have focused on since the 1950s, with ever accelerating effort, is to eliminate (or at least dramatically reduce) the need for computer programming. We’ve made it so only a tiny fraction of those developing applications actually know how to program a computer. We’ve made it so most can achieve 80% of what they are building using existing libraries, code bases, and services. We’ve made some classes of applications, for example spreadsheets, not seem like programming at all. But in a broad sense we haven’t eliminated programmers. Will Generative AI finally be the tool that eliminates most need for programming? Or, like our other efforts, will it actually fuel more development, just at yet another, higher level of abstraction? In any case, it is hard for me to get bent out of shape over Generative AI replacing classic programming. That’s been our goal all along.

Epilogue: One of the fun things I did when I was still in the early stage of my career was write a lock manager for a database system. I had no formal education. There was no Internet, so no way to easily research. There was no code to model on. So it was a great example to play with Generative AI. I asked Grok to write me a lock manager. Looked good. I asked for some features often mentioned, but rarely used, in lock managers. It added those. I asked it to make it distributed. Then I started asking it to make optimizations. I got excited about what it did. The interesting part was I had to ask for the features and for the optimizations. I still created the lock manager, just at a different level of abstraction. I had to express what I wanted, and do it clearly. Coding a lock manager in the 1970s was fun. Being on the periphery of distributed lock manager development in the 1980s was also fun. Thinking about coding a new one in the 2020s? Boring. I could get excited about creating a new database system, but a lot of the pieces I just want to make happen, not get into the weeds of every individual piece. I’d be happy to let GenAI do that for me.

1. In 1994, one of David Vaskevitch’s roles at Microsoft was CTO of Developer Division. As he came out of a DevDiv staff meeting I asked what they had talked about. He described that he was pushing for a common run-time across the languages, and from the description I pointed out that it sounded just like the VAX Common Run-Time. David was later the Sr. VP of DevDiv during development of .NET.

2. These sadly did not survive DEC’s sale of its database business to Oracle. Datatrieve is actually still alive, maintained and sold by VMS Software Inc.

3. That was before the “Big Data” era.

4. In the 1960s-70s there was a similar caste system, Application Programmers and System Programmers.

Update (1/4): Some people are misreading this post (not a surprise when 10s of thousands of people have read it), so let me clarify. I’m not picking on developers at any level here. I’m not suggesting that we go back to writing everything (or even anything) in assembler. I’m pointing out that we keep adding abstractions to make it easier both for professional programmers to be more productive AND for those who are not programmers to solve their own problems without requiring the help of a professional programmer. And that’s how we should look at AI. For some of us it is a new tool for helping us write apps. For others, it is a means to not have to write an app directly at all. It is possible this means fewer jobs for professional programmers going forward. More likely it means there is greater demand for analysis and high level design skills, less demand for coding skills. In any case, we need to embrace AI, not fight it.

Regarding footnote 4, when I was at school in the early 80s in the UK I was interested in becoming a computer programmer. The only careers direction I had was to choose between an application or systems programmer. So that distinction persisted. I went to University in 1984, studied Maths/Comp Sci. I got a job in 1988 doing Pascal, and then RALLY in the early 90s.

table generated with grok – do you at least sometimes check what LLM spits out? the periods are way off, some languages were developed way later. Are you even a developer?

No generation ever ends so I saw no reason to nitpick over the examples not meeting the dates for the emergence of that generation.

Wow! I didn’t start programming until the 80s, but this brings back some pretty old (and not too happy) memories.

I’ve done ALL those things, including with old Amdahl/IBM 360 machines (with CMS & MTS and *punch* *cards*) as well as DEC/PDP-11 and VAX/VMS (yikes).

Except for punch cards those are happy memories for me. Actually I take even the punch card comment back. I have some very happy experiences with those too.

The clickbaity headline wants to say, you aren’t a programmer until you know the instruction set of a processor and program in assembly language. If your job is high level application development, and you have forgotten what you did in your microprocessor class, well, you don’t know how to program.

Actually what the headline wants to say is those complaining about AI killing traditional programming don’t realize that it just means we are getting closer to the end state the industry has been working towards all along.

it sounds so but the author has clarified that

“98% of the programmers don’t know how to write low level software. ”

Ok, but writing low level software is 2% of the job market. So, what’s wrong?

When I was studying computer science in 1986, I learned how to write assembly language, Forth, BASIC… and to develop hardware and the associated software.

I used this knowledge for a few years and then switched to C and then Database Admin (I am very good at writing fast and efficient PL/SQL code).

Why? Better pay, more jobs.

The issue with using LLMs as an interface is that they are a horrible abstraction. They do not provide the same output for the same input, they produce pseudo-human-readable code instead of machine code, and worst of all, they are not formally verifiable, or even testable.

This is not an abstraction in the same way as the others in the stack. As code needs to implement formal requirements / formally described functionalities, the need to actually boil down a requirement to a formal language is actually a huge benefit. Throwing that away is the abstraction that someone who doesn’t understand how software is made would promote, as on their management abstraction layer they’re used to delivering at a high business abstraction layer, without worrying about what the actual process is that should be put in place.

And this differs from Microsoft Access how? Or Excel? Or Rules Engines? Or NoCode tools? You believe that someone wrote formal specifications before they implemented their spreadsheet? Or that they had the skills to fully understand how they got to the final result?

But for more formal applications I do think that you need someone who guides the AI and verifies it is doing as asked. The VC I know building a Due Diligence system didn’t tell a GenAI tool “Create a DD System”. They are carefully guiding its construction. They tested various questions against different LLMs to see which was best at what. They weave together the results from the different tools. They re-test on new releases, or availability of completely new models. It’s very much a software development effort, it just doesn’t require an army of coders. I think it is one person doing the “coding” and one person doing the specification, with the other partners critiquing and providing feedback to test it. It would take a small army to do this in a 3GL, and they’d get it wrong more often than not because they would be software developers not financial experts.

As I alluded to in my epilogue, in the end I had to carefully guide Grok to get the lock manager I wanted. The good news is that it worked well incrementally, rewriting the code each time. But if I was going to do it for a real project? I’d write a spec in the form of directives I wanted to give it to describe the lock manager first. And I’d think carefully about the precision of my questions to minimize the potential of it going off the rails. Which makes me think of Microsoft in the 1990s. Technical Program Managers, engineers in every sense except (in many cases) they didn’t meet our coding bar, would write specs and developers would implement them. Sometimes the PMs would even prototype what they wanted in Visual Basic and then the SDEs would re-implement it in C. I was talking to the Excel SDE who implemented Pivot Tables once and he said he never really understood pivot tables, he just implemented what the PM spec’d. This is little different than where we are going with AI, for applications that deserve formal specification.

Still don’t get the purpose of this article

Weird flex and a clickbait.

My career started in the 80s. The first job was 6502 assembly on Apple ][, Atari, and Commodore. For obvious reasons, everyone knew the computer inside and out.

As time marched on, worked on minis and mainframes. There always seemed to be a divide between developers that could write a compiler and those that treated it like a magic box. The ones that understood the compiler always made better debuggers. They almost instinctively knew where things went south.

Worked on a major project where the person who was the “expert” in a highly customized scripting environment clearly had never taken compiler theory. Shortly after the first lesson on how to use the scripting language, he was let go (for lots of reasons). The only reason they hadn’t fired him earlier was they were afraid no one else could pick up the custom scripting environment. I’m confident nearly everyone I graduated with would have understood it.

Projects need at least one person who understands the system soup to nuts. They generally need several junior developers who can turn the crank and get results. During reviews, the soup to nuts dev needs to be listened to.

AI? Another level of junior developer. But with all junior developers, you hope they get more proficient over time.

I started programming when I was a teen in the 1970s. I have written kernel level code in MVS (a RACF exit ). I modified an OS/2 keyboard device driver for a kiosk. I have coded in multiple assembler languages over the years.

This morning I was using Gemini to assist with Angular and Java coding, since I was in an area new to me.

I have seen a lot of change. It is still a blast to code.

If it wasn’t fun we’d all welcome our AI overlords with relief if not joy! 😆

Yes, I have used assembly language. It is a programming language. So are Java or JavaScript. Anyone using any of those three languages is ‘programming a computer’. Anyone using any of those three languages is using someone else’s libraries to get stuff done. By your narrow minded definition only people who write BIOS code are ‘programming a computer’ and maybe not even them, maybe it is the Intel engineers who write the CPU microcode?

The idea that assembly language is the only ‘true’ programming language makes me think you don’t actually know how it works. When you write assembly or JavaScript you are equally ‘programming a computer’ and in both cases you are depending on other people’s code to get stuff done. Assembly uses the operating system and the BIOS and the CPU microcode.

Actually you are programming varying levels of abstraction. Most programmers don’t know the lower levels and don’t see “the computer”, which is the point.

Those of us who have worked on operating systems on non-microcoded machines (or written microcode) have indeed programmed to true bare metal.

My point was that the ‘levels of abstraction’ go at least two levels below assembly language, something the writer doesn’t appear to know. By his definition almost nobody in the history of computing was ever a programmer or at least did not know how to ‘program a computer’. When you worked on your non-microcoded machines or wrote microcode, did you do it in binary, or did you write the compiler yourself? Are you sure you did not in any way depend on someone else’s code to make it work? Did you write the code to put your code onto the computer/micro-controller?

You’re just being silly. And completely missing the point.

No, I am pointing out that the writer was being silly. He was essentially saying that ‘most developers could not build a computer from scratch’ and he would be correct. Himself included. The stupid bit was that he thought that assembly language was the lowest level. It isn’t.

Yes, AI can be thought of as another abstraction layer on top of programming languages, but there are some major differences between AI to code and a high level language to a lower level language. AI as it stands today can be used in several distinct ways:

1. As auto complete (very useful)

2. As a search engine (very useful)

3. For vibe coding: really only works for very specific use cases (yes, I have vibe coded a small app and it worked).

But it is NOT a programming language.

And at the present point in time vibe coding won’t work for anything beyond very basic use cases. There have been attempts in the past to design programming languages more like English and AI vibe coding is an attempt to go one step further. However, the reason programming languages exist is we usually need some amount of exactness in our programs and at present AI just doesn’t cut it.

That’s (a) not what I’m saying and (b) I’ve done microcode

oh do I miss the days we did this in the early 80’s

using imsai, altair, cpm assembly: https://youtu.be/2y5oVHNfbf8?si=bqHbs5RVYRnbVahZ

now I use what I learned those days for art.

i was writing 6800 and 6502 in 1980 when I was 10, does that keep me out of the 98 percent?

Yes. Not that I was trying to classify people. More pointing out the growing levels of abstraction and that we shouldn’t fear another level of abstraction.

This pretty much describes my software engineering journey, starting with keypunching Fortran statements and then writing PDP-10 assembler. With a side diversion to hacking KL10 microcode so I appreciate the code snippet at the beginning of the article.

Hi Rich!

When I was grabbing that snippet I noticed that Don Dossa had done some of the later microcode work on the KS10. I can’t recall if you knew Don. Don and I were in TOPS-10 support and decided we wanted to learn about microcoding, so took a class taught by a Raytheon Fellow (I can’t remember actual title back then, but whatever the most senior technical level at Raytheon). I got dragged into databases shortly thereafter, but Don went to work on Jupiter microcoding the I/O processor. After Jupiter was cancelled he did some other microcode work, which I hadn’t realized (or I’d forgotten).

Hi Hal,

My first run-in with KL microcode was about a month after I started with DEC. I was in the TOPS-20 monitor internals course which spent a lot of time on the virtual memory system. I was curious about the PMAP system call which allowed you to remap a page of your user memory space to another page.

I wondered what happened if you mapped a page onto itself. The page just disappeared from your memory space.

My inner hacker wanted to know what happened when the page that you mapped onto itself was the page containing the instruction currently being executed.

Instant crash. I think this was on the dev machine in MR (KL2102?)

Not an easy fix, I recall.

Very cool.

KL2102 was the TOPS-20 dev machine

As a 70s Computer Engineering graduate, and subsequent life long developer, my income was increasingly derived from fixing the problems of developers less and less aware of programming, constructs, logic and simply plugging a system together.

That’s fine, but your update 1/4 hits a nail on the head. “More likely it means there is greater demand for analysis and high level design skills, less demand for coding skills.” Sadly, there is less and less analysis, less and less specification, analysis or documentation. On that last matter, there exists an attitude of ‘if it goes wrong, there’s no need to look back at why, just do it from scratch again until it meets the expected need’ (itself not often the actual need).

I guess that the question is really about what is being developed. This article assumes that Developers develop code (which is reasonable), but I submit that Developers (all types, even such as Real Estate developers) develop solutions, and that code is just one type of tool for expressing a solution.

In 1977, when I was in high school, I came across Rodney Zaks’ book “Programming the Z80”, and this set my career. I found that I could ‘see’ what the CPU was doing ( i.e. the contents of the registers and the effect of instructions upon them) and easily imagine code that would achieve desired outcomes.

Impressive for a fifteen year old, but not very useful in today’s world, where I am more likely to hire the talent to write the code, once I have designed the solution.

In conclusion, writing in assembly language is a good skill to have in your past (unless you work for a company like Intel, AMD, or Netgear), but Developers develop solutions, not code, so it is nice if they “speak” assembly language, but much more important that they can speak to their human clients.

That was exactly my point. The post was aimed at all those freaking out about AI taking away programming jobs. But we’ve been steadily moving application development upstream from bits, and bytes, and instructions from the moment we had digital computers. We are just on another step. One big enough to be above the trend line.